
Reversing Academe’s Sometimes Perverse Incentives

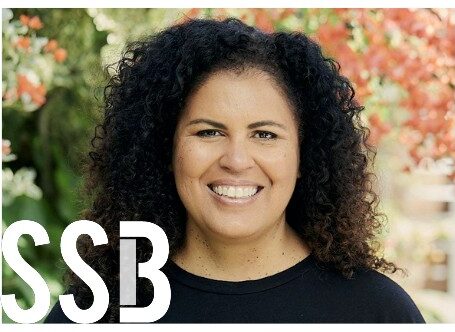

Full of people who didn’t publish – but also of those who did. (Photo: NotFromUtrecht – Own work. Licensed under CC BY-SA 3.0 via Commons)

Sadly, the story is all-too familiar. But this is not to say that science is imperiled, only that we need to ensure the reward and support structures in academia promote the best practices rather than corner cutting.

This article by Virginia Barbour originally appeared at The Conversation, a Social Science Space partner site, under the title “Publish or perish culture encourages scientists to cut corners”.

Peer review is currently the primary tool we have for assessing papers prior to publication. Although it has its strengths, especially when overseen by skilled editors, it can’t pick up all instances of fraud or sloppy scientific practices.

In the past, these errors may have lain hidden for many years, or never come to light. Now, post-publication scrutiny is picking up more and more papers with questionable data. This is leading to corrections, or even retractions. Websites such as Retraction Watch have sprung up to document these retractions.

Peerless research

To non-academics, this might all seem rather surprising. Isn’t science governed by strict protocols for performing and reporting research?

Well, no. Unlike industrial processes, for example, which have standard operating procedures and oversight, science is usually organised locally. Expert laboratory heads typically have the responsibility for the oversight of their laboratories’ work.

Many laboratories work as part of larger collaborations, which may have their own checks and balances in place, as do the academic institutions to which they belong. Even so, ultimately the researchers and individual laboratories are responsible for their own work.

The medical sciences have developed their own standards of reporting studies, including clinical trials. But even these standards are not employed universally.

The system of rewards within science is possibly even more perplexing. Academia is a highly competitive profession. The basic training in science is a PhD, with more than 6,000 awarded each year in Australia alone, which is many more than can ever end up as career researchers, even at the lowest level.

The situation gets worse the more senior a researcher gets. According to a 2013 discussion document, fewer than 5% of those who were originally awarded PhDs find permanent academic positions. Even these senior researchers rarely have permanent positions, but are instead expected to compete for funding every few years.

And the primary way academics compete is in the number of papers they publish in peer-reviewed journals, especially the handful of what are considered to be top journals, such as Science, [Nature](http://www.nature.com/) and The Lancet.

Under pressure

Why does this all matter? Doesn’t this competition lead to selection of the best of the best in research and a faster pace of advancement of science? In fact, the reverse may be the case.

In a seminal paper published in 2005, provocatively titled Why Most Published Research Findings Are False, John Ioannidis discussed a number of reasons why research may be unreliable. One finding was that papers in highly competitive areas were more likely to be false than papers in less competitive fields.

In 2014, the Nuffield Council on Bioethics probed these issues among UK researchers in a year-long study. What they found was alarming.

Researchers stated that there was strong pressure on them to publish in a limited number of top journals, “resulting in important research not being published, disincentives for multidisciplinary research, authorship issues, and a lack of recognition for non-article research outputs”. Even worse was that the need to get into these top journals led to “scientists feeling tempted or under pressure to compromise on research integrity and standards.”

What can be done? Increasingly, groups of scientists are coming together to develop standards in reporting, conduct and reproducibility. Organisations such as the Committee on Publication Ethics (COPE), which I chair, advise editors on how to handle problem papers.

Perhaps most interestingly, a number of technological innovations have arisen that could lead to more reliable science, if adopted widely. Probably the most important innovation is that of Open Science, i.e. open access to research publications, and open access to the data and methodology that underpin those publications.

But we also need to develop ways to reward scientists who do make their publications, data and methodology open for scrutiny, and don’t just pursue publication in top journals.

Research data organizations, such as the Australian National Data Service (ANDS), are developing the infrastructure for systematic and standardized ways of linking to data, but as yet funders and institutions do not routinely reward such behavior.

In the end, science is a human endeavor. And like humans everywhere, those who work in it will do what they are rewarded for, for better or for worse. So we need to make sure those reward structures are encouraging good quality research, not the opposite.
