Beating the Flawed Metric That Rules Science

In order to improve something, we need to be able to measure its quality. This is true in public policy, in commercial industries, and also in science. Like other fields, science has a growing need for quantitative evaluation of its products: scientific studies. However, the dominant metric used for this purpose is widely considered to be flawed. It is the journal impact factor.

The impact factor is a measure of how many times recent papers from a particular scientific journal are cited in other scientific papers. Journals with a high impact factor enjoy prestige. Scientists compete to publish their work there, because this boosts their reputation and funding opportunities. In order to be published in such journals, a paper needs to pass prepublication peer review, a process in which two to four anonymous scientists evaluate its quality.
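To make the definition concrete, here is a minimal sketch of the commonly used two-year form of the calculation: the citations a journal receives in a given year to items it published in the two preceding years, divided by the number of citable items it published in those two years. The two-year window is an assumption here, since the description above only says "recent papers". The function name and the example figures are hypothetical.

```python
def two_year_impact_factor(citations_to_prior_two_years: int,
                           citable_items_prior_two_years: int) -> float:
    """Citations this year to the journal's previous two years of papers,
    divided by the number of citable items published in those two years."""
    if citable_items_prior_two_years <= 0:
        raise ValueError("journal published no citable items in the window")
    return citations_to_prior_two_years / citable_items_prior_two_years


# Hypothetical journal: 200 citable items over two years, cited 900 times.
example_if = two_year_impact_factor(900, 200)
print(example_if)  # 4.5
```

Note that the result is a single number for the whole journal: every paper published there inherits it, whether it was cited often or not at all.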

This article by Nikolaus Kriegeskorte originally appeared at The Conversation, a Social Science Space partner site, under the title “How science can beat the flawed metric that rules it”: http://theconversation.com/how-science-can-beat-the-flawed-metric-that-rules-it-29606

Because the impact factor is based on the number of times all recent papers in a journal are cited, it is widely understood to provide a poor indication of the quality of each individual paper appearing in that journal. It is not just scientists, but also many journal editors and publishers who object to this metric. We have come to a point where the impact factor is almost universally rejected, and embracing it would pose a bit of a risk to your status in academic circles.

Throwing away a bad map

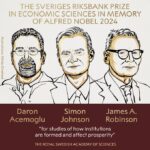

In the San Francisco Declaration on Research Assessment (DORA), editors, publishers, and scientists recommend against the use of journal-based metrics, such as the impact factor, as indicators of the quality of individual papers. Some of the signatories recently reported on DORA. Scientists such as open-access pioneer Michael Eisen, recent Nobel laureate Randy Schekman, and science blogger Dorothy Bishop have similarly been calling for impact factors to be ignored when the quality of research is assessed.

At the same time, however, almost every scientist relies on the metric (or the prestige it confers on a journal) when selecting what to read. “How do you choose what to read?” is one of the more embarrassing questions to drop on a scientist.

Despite its flaws, scientists will rely on the impact factor as long as they have no better indication of the reliability and importance of new scientific papers. When deciding which of two new papers to read (assuming they are equally relevant, and we don’t know the authors), most of us will prefer the one that appeared in the journal with the higher impact factor. Assessments of the overall scientific contribution of a scientist or department, similarly, rarely ignore this metric.

It is unrealistic to suggest that a committee deciding who to hire or fund should replicate the assessment of individual papers already performed by peer review. Such committees are typically under considerable time pressure. If they are to make a good decision, they will need to use all available evidence to estimate the quality of the work. The impact factor is unreliable. However, direct assessment of the applicant’s work will similarly be compromised by the limits of the committee’s time and expertise.

Given a choice between a bad map and no map at all, a rational person will choose the bad map. Asking people to ignore the only indication of the quality of recent scientific papers we currently have in favor of “judging by the content” is like saying that we shouldn’t choose which books to read without having read them first.

How to beat the impact factor

The only way to beat the impact factor is to provide a better evaluation signal for new scientific papers.

When a paper is published, it is read and judged in private by experts in the field who work on related questions. All we need to do to beat the impact factor is sample those expert judgements and combine them into numerical evaluations that reflect peer opinion on the reliability and importance of individual scientific papers. Such a process of open evaluation would provide ratings that are specific to each paper and combine a larger number of expert opinions than traditional peer review can. The process could also benefit from post-publication commentary.

An open evaluation system will need to be more complex than Facebook likes or product ratings on Amazon. We will need multiple rating scales, at least two: for reliability and importance. We will also need to enable scientists to sign their ratings with digital authentication. Signed judgments will be essential to ensure that the system is trustworthy and transparent. An average of even just a dozen signed ratings by renowned experts would almost certainly provide a better evaluation signal and could free us from our dependence on the impact factor.
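As a rough illustration of how such ratings might be combined, the sketch below averages signed ratings on the two scales named above, reliability and importance. It is one possible reading under stated assumptions, not a proposed implementation: the 0 to 10 scale, the `SignedRating` type, and the `aggregate` function are all hypothetical, and the `rater` field merely stands in for an identity that has already been verified by digital authentication.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class SignedRating:
    rater: str          # verified identity of the signing scientist (assumed)
    reliability: float  # assumed scale: 0 (low) to 10 (high)
    importance: float   # assumed scale: 0 (low) to 10 (high)


def aggregate(ratings: list[SignedRating]) -> dict[str, float]:
    """Average each scale separately, counting one rating per signer."""
    if not ratings:
        raise ValueError("no ratings to aggregate")
    # Keep only the most recent rating from each signer so nobody counts twice.
    latest = {r.rater: r for r in ratings}
    return {
        "reliability": mean(r.reliability for r in latest.values()),
        "importance": mean(r.importance for r in latest.values()),
        "n_raters": len(latest),
    }


# Hypothetical ratings for one paper; rater "A" later revises their rating.
ratings = [
    SignedRating("A", reliability=8, importance=6),
    SignedRating("B", reliability=6, importance=9),
    SignedRating("A", reliability=7, importance=6),
]
print(aggregate(ratings))
```

Keeping the two scales separate means a paper can be flagged as important but not yet reliable, or the reverse, rather than collapsing both judgments into one score.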

Time for change

Scientific publishing is currently in a state of flux. Recent developments point in the right direction, although they do not go far enough. These include PubMed Commons, PLoS Open Evaluation, Altmetric, and a large number of new start-up companies, such as PubPeer, ScienceOpen, and the Winnower. Eventually, we might want to consolidate the open evaluation process into a single system, which should ideally be publicly funded and entirely transparent.

The evaluation of scientific papers steers the direction of each field of science, and – beyond science – guides real-world applications and public policy. If papers had reliable ratings, science would progress with a surer step. Only findings found to be reliable and important by a broad peer evaluation process would be widely publicised, thus improving the impact of science on society.

The perceived importance of a scientific paper should reflect the deepest wisdom of the scientific community, rather than the judgments of three anonymous peer reviewers. It is time scientists took charge of the evaluation process. Open evaluation will mean a fundamental change of the culture of science toward openness, transparency, and constructive criticism. We are slowly realizing that the rules of the game are ultimately up to us, and taking on the creative challenge to change them.

***

Nikolaus Kriegeskorte has edited a collection of visions for open evaluation and postpublication peer review. He frequently argues in favour of reform for scientific publishing. He has served in editorial roles for Frontiers and PLoS Computational Biology, and is on the editorial board of ScienceOpen. He receives funding from the UK Medical Research Council, the European Research Council, and the Wellcome Trust.