Have We Outsourced Impact Measures to Database Providers?

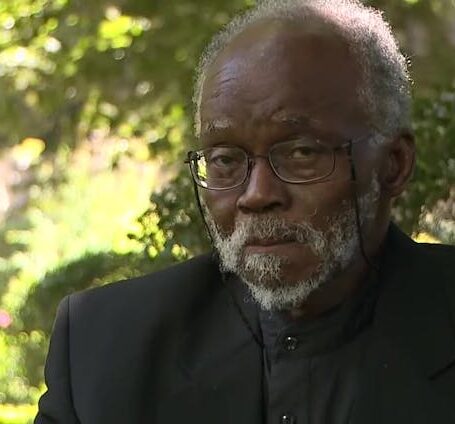

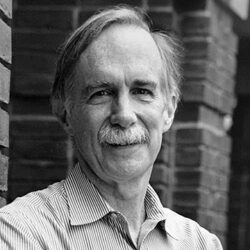

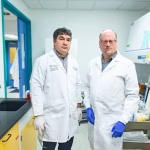

In our PLoS ONE article from last year, we investigate to what extent research metrics have become established and accepted as legitimate ways to assess research performance. To do this, we use a theoretical framework from the sociology of professions developed by Chicago sociologist Andrew Abbott. The purpose of our research is to offer a new perspective on the ongoing debate on research metrics.

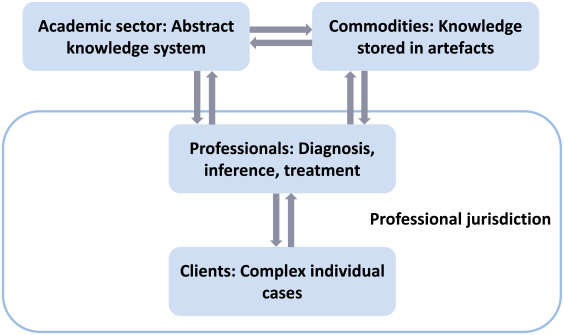

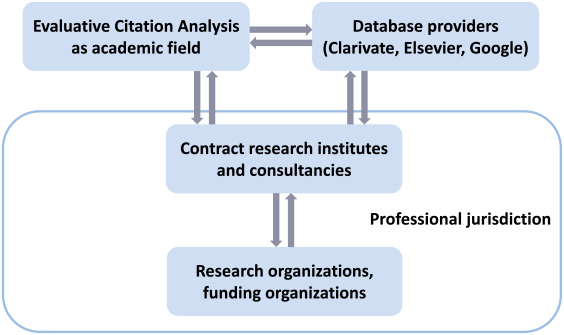

The sociology of professions is about how expertise is institutionalized in modern societies. It assumes a basic division of labor between an academic sector producing abstract knowledge and professional actors who apply this knowledge to complex individual cases (Figure 1). While the function of the academic sector is to produce new knowledge and to train novices in the profession, the job of professionals consists in serving individual clients. Abstract knowledge legitimizes professional work because it ties work for clients to the general values of logical consistency, rationality, effectiveness, and progress. Thus, scientific authority can be an important source of legitimacy in the competition among professional groups for exclusive domains of competence, so-called professional “jurisdictions.”

Applied to the field of evaluative citation analysis (ECA), two main types of clients are potentially interested in bibliometric assessment services: organizations conducting research, and research funders. In theory, the most important reason why such organizations would be interested in quantitative assessment techniques is the sheer growth of science. Since the knowledge base in most areas of science grows faster internationally than the financial resources of any individual organization, these organizations are routinely faced with resource allocation problems. They have to make selection decisions when hiring and promoting research staff, and when choosing among research funding applications. The demand for routine assessment techniques that are relatively cheap and universally applicable thus seems understandable.

On the other hand, there are important reasons why research metrics are strongly contested within scientific communities. The main reason is that they threaten the basic principle that only scientific colleagues from the same research area are competent judges of the merit of scientific contributions, a principle generally referred to as “peer review.” The exclusive reliance on evaluation by scientific colleagues who are at the same time competitors within the same field is called “reputational control,” a term that goes back to sociologist Richard Whitley. While bibliometric indices depend on peer review because they build on peer-reviewed journal publications, the resulting impact metrics can be used by administrators, policymakers, or indeed anybody else without any specific understanding of the work to be evaluated. Therefore, bibliometric assessment is perceived by many scientists as an intrusion on basic mechanisms of reputational control and thus on their professional autonomy.

Faced with this tension between soaring demand for relatively objective performance assessment on the one hand and fierce contestation on the other, the questions are how and to what extent particular assessment techniques have been established, and why this has occurred in some national science systems but not in others. Yet our focus here is on the more general question of to what extent the academic research area of ECA confers legitimacy on research assessment practice. We argue that, contrary to what would be expected from Abbott’s theory, the academic sector has failed to provide scientific authority for research assessment as a professional practice. This argument is based on an empirical investigation of the extent of reputational control in ECA, an academic sector closely related to research assessment practice (Figure 2).

Abbott’s and Whitley’s ideas are used to derive three empirical propositions. First, the capability to legitimize expertise as professional work in competitive public and legal arenas depends on scientific legitimacy. Second, scientific legitimacy is a function of reputational control: strong reputational control confers scientific legitimacy to a greater extent than limited reputational control. Third, the closure of academic fields to outsider contributions is a useful indicator of reputational control.

This blog post is based on the authors’ article, “Does bibliometric research confer legitimacy to research assessment practice? A sociological study of reputational control, 1972-2016,” published in PLoS ONE (DOI: 10.1371/journal.pone.0199031).

Starting from all citation impact indices proposed since the Journal Impact Factor (1972), we constructed inter-organizational citation networks from Web of Science publications citing these indices. We show that in these networks, peripheral actors contribute the same number of novel bibliometric indicators as central actors. In addition, the share of newcomers to the academic sector has remained high.
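To make the newcomer indicator concrete, here is a minimal sketch of how a yearly share of newcomers could be computed. It is an illustration only, not the procedure used in the article: the function name `newcomer_share_by_year`, the `(year, organization)` record format, and the toy data are all assumptions made for this example.

```python
from collections import defaultdict


def newcomer_share_by_year(records):
    """Return {year: share of organizations active in that year
    that did not appear in any earlier year}.

    `records` is an iterable of (year, organization) pairs. This input
    format is a hypothetical simplification, not the article's data model.
    """
    orgs_by_year = defaultdict(set)
    for year, org in records:
        orgs_by_year[year].add(org)

    seen = set()  # organizations observed in earlier years
    shares = {}
    for year in sorted(orgs_by_year):
        active = orgs_by_year[year]
        newcomers = active - seen
        shares[year] = len(newcomers) / len(active)
        seen |= active
    return shares


# Toy data, invented purely for illustration.
records = [
    (2014, "Org A"), (2014, "Org B"),
    (2015, "Org A"), (2015, "Org C"),
    (2016, "Org A"), (2016, "Org B"), (2016, "Org D"), (2016, "Org E"),
]
shares = newcomer_share_by_year(records)
```

By construction, every organization counts as a newcomer in the first year of the data, so that year is not informative. A share that stays high in later years means that organizations with no prior record in the area keep entering it, which is what one would expect in a field with limited reputational control.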

We conclude from these findings that, though ECA can be described as a research area within the broader category of library and information sciences, the growth in the volume of ECA publications has not been accompanied by the formation of an intellectual field with strong reputational control. This finding is relevant for understanding the present state of professionalization in bibliometric evaluation techniques. If reputational control were high, we would expect novel recommendations for improved research assessment to be launched by the field as a result of the recent intensification of research on this topic. Yet, in the present situation of limited reputational control, even carefully drafted academic contributions to improve citation analysis appear unlikely to have a significant impact on research assessment practice.

While we do not regard a strong professionalization of bibliometric expertise as a necessary or desirable development, what seems problematic is the powerful role of database providers in defining reference standards of research performance. Higher education rankings have been criticized on the grounds that non-experts have gained control over the definition of what constitutes excellent education. Clearly, the same argument applies to the definition of high-impact research as provided by Clarivate Analytics (WoS) and Elsevier (Scopus). We conclude that a growing gap exists between an academic sector with little capacity for collective action and increasing demand for routine performance assessment by research organizations and funding agencies. This gap has been filled by database providers. By selecting and distributing research metrics, these commercial providers have gained a powerful role in defining de facto standards of research excellence without being challenged by expert authority.