The Robot Will See You Now

Over half a century ago I obtained a computer program called ELIZA. It was a piece of software, probably what today we’d call an algorithm, or even a ‘chatbot,’ that emulated a non-directive, Rogerian therapist. Developed by Joseph Weizenbaum at MIT, it was essentially an early exploration of natural language processing by a computer. Weizenbaum claimed his purpose was to show how superficial communication between people and machines is. I suspect he also wanted to show the limitations of Rogerian therapy and the way it mechanically distances the therapist from the patient.

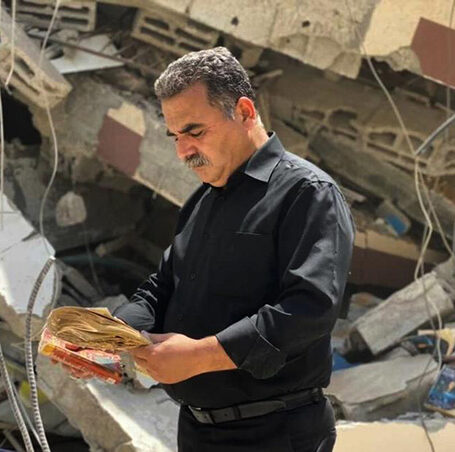

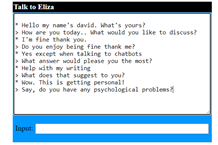

A typical conversation would be something like

In my research group we regarded it as an amusing game. We tried to see if we could trip ELIZA up or get ‘her’ to say something weird. But many people were convinced ELIZA was a real, if rather boring, person. Weizenbaum’s joke came close to backfiring, since it opened the way to ever more sophisticated forms of natural language human/computer interaction. You can’t get help from most websites these days without first going through some sort of ‘chat’ with an automated system. Voice recognition is now so sophisticated that spoken communication with machines is largely taken for granted.
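To make concrete how little ‘understanding’ a program of this kind needs, here is a minimal Python sketch in the spirit of ELIZA. It is not a reconstruction of Weizenbaum’s original DOCTOR script: the keyword rules, the pronoun table and the names `respond` and `reflect` are hypothetical illustrations. The program simply looks for a keyword pattern, swaps ‘I’ for ‘you’ in the captured words, and echoes them back inside a canned question; when nothing matches it falls back on a neutral prompt.

```python
import re

# Hypothetical pronoun swaps so the user's own words can be echoed back.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my",
}

# Hypothetical keyword rules: (pattern, response template), tried in order.
RULES = [
    (re.compile(r"\bi need (.*)", re.I), "Why do you need {0}?"),
    (re.compile(r"\bi am (.*)", re.I), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.I), "Tell me more about your family."),
    (re.compile(r"\bbecause\b", re.I), "Is that the real reason?"),
]

# Non-directive fallback used when no keyword is found.
DEFAULT = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(w, w) for w in fragment.lower().split())


def respond(statement: str) -> str:
    """Return the first matching rule's response, or the neutral fallback."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Templates without a placeholder simply ignore the captured groups.
            return template.format(*(reflect(g) for g in match.groups()))
    return DEFAULT


if __name__ == "__main__":
    for line in ["I am unhappy.", "I need to talk about my job", "The weather is nice"]:
        print(f"> {line}\n{respond(line)}")
```

Given “I am unhappy.” the sketch replies “How long have you been unhappy?”, and given “The weather is nice” it can only say “Please go on.” Nothing in it models what unhappiness is; it is string substitution that happens to read like attentive listening.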

The most powerful motor (to use an old-fashioned implicit metaphor) for these developments is what software engineers have very cleverly called ‘Artificial Intelligence.’ The anthropomorphic association couched within this label gives it a humanoid patina that ignores what it means for a human being to be intelligent. This became apparent when it emerged that diagnostic health algorithms had built-in biases reflecting inequities already present in the provision of health care. People from some ethnic groups, who already receive limited health care support, were further disadvantaged by the data on which the software was built. That software simply enshrined what was already happening rather than making an ‘intelligent’ decision.

What is most ignored by computer scientists and software engineers, in their promotion of automated systems and robots to replace human activities and endeavors, is that who we are, what we think and believe and feel, as people, is integrated with our being as living bodies that have grown up in contact with other humans. Phenomenology has long emphasized what Maurice Merleau-Ponty, one of that philosophy’s leading figures, called ‘embodiment.’ By this he meant our being as a physical organism, with all the constraints and possibilities that entails, and, most importantly, the consequences of interacting with other people who experience the world in similar ways.

The COVID pandemic has served to highlight the importance of person-to-person contact. The rapid spread of the disease is a powerful illustration of how much people mix with one another around the world. The profound loss people have felt when unable to meet others freely, face to face, further illustrates how crucial this contact is for sane survival.

Intelligence goes far beyond ‘if-then’ algorithms and patterns in huge data sets. It comes from knowing what it is to be hurt and how to ease the pain of others. It is more than the ability to feign an understanding of natural language. It is to be a person.