New SSRC Project Aims to Develop AI Principles for Private Sector

Aligning artificial intelligence products with society’s objectives is impossible, according to the newest program of the New York-based Social Science Research Council, without corporate disclosure and auditing of the potentially substantial risks associated with AI.

Given this, the new program, the AI Disclosures Project, seeks to create structures that both recognize the commercial enticements of AI and ensure that issues of safety and equity are front and center in the decisions private actors make about AI deployment. The project is led by technologist Tim O’Reilly, known for popularizing terms such as “open source” and “Web 2.0,” and economist Ilan Strauss.

In announcing the program, the SSRC laid out the terrain in which this ‘first, do no harm’ concept will lie:

“If we want prosocial outcomes,” O’Reilly has written, “we need to design and report on the metrics that explicitly aim for those outcomes and measure the extent to which they have been achieved.”

Noting that companies have adopted frameworks in other areas, such as the Generally Accepted Accounting Principles or the International Financial Reporting Standards, O’Reilly added that the issue isn’t the lack of AI principles so much as it is their lack of specificity and general milquetoastery. “Today, when disclosures happen, they are haphazard and inconsistent, sometimes appearing in research papers, sometimes in earnings calls, and sometimes from whistleblowers. It is almost impossible to compare what is being done now with what was done in the past or what might be done in the future. … This is unacceptable.”

Through high-quality research, collaboration, and policy engagements, the SSRC project will develop a systematic disclosure and auditing framework that can become the basis for a set of “Generally Accepted AI Management Principles.” Given the business and economics orientation of the program leaders, the program will draw from the business community and its best practices and metrics instead of imposing a top-down set of principles. “Our goal,” according to SSRC, “is to learn from companies that are acting responsibly, and to use their best practices to shape disclosure standards for AI auditing and regulation that are informed by the commercial realities of AI markets.”

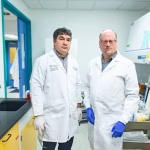

O’Reilly, the principal investigator and co-director of the project, is the founder, CEO, and chairman of O’Reilly Media, and a visiting professor of practice at the UCL Institute for Innovation and Public Purpose (IIPP). At the institute, he and Mariana Mazzucato oversaw a multi-year research project sponsored by the Omidyar Network that investigated Big Tech’s use of algorithmic allocations to extract rents from their ecosystems.

Strauss, the program director of the AI Disclosures Project, is an honorary senior fellow at IIPP, where he was head of digital economy research on a multi-year Omidyar Network-funded research project. He is also a visiting associate professor at the University of Johannesburg. Strauss was the joint recipient of an Economic Security Project grant investigating Big Tech’s acquisitions of technological capabilities.