Revisiting the ‘Research Parasite’ Debate in the Age of AI

A 2016 editorial published in the New England Journal of Medicine lamented the existence of “research parasites,” those who pick over the data of others rather than generating new data themselves. The article touched on the ethics and appropriateness of this practice. The most charitable interpretation of the argument centered on the hard work that goes into generating new data, which costs millions of research dollars and takes countless person-hours. Whatever the merits of that argument, the editorial and its associated arguments were widely criticized.

Given recent advances in AI, revisiting the research parasite debate offers a new perspective on the ethics of sharing and data democracy. It is ironic that the critics of research parasites might have made a sound argument, but for the wrong setting, aimed at the wrong target, at the wrong time. Specifically, the large language models, or LLMs, that underlie generative AI tools such as OpenAI’s ChatGPT face an ethical challenge in how they parasitize freely available data. That challenge is prompting new conversations about data security that may undermine, or at least complicate, efforts at openness and data democratization.

The backlash to that 2016 editorial was swift and fierce. Many arguments centered on the anti-science spirit of the message. For example, meta-analysis, which re-analyzes data from a selection of studies, is a critical practice that should be encouraged. Many groundbreaking discoveries about the natural world and human health have come from this practice, including new pictures of the molecular causes of depression and schizophrenia. Further, the central criticisms of research parasitism undermine the ethical goals of data sharing and ambitions for open science, where scientists and citizen-scientists can benefit from access to data. That ideal differs from the status quo in 2016, when data published in many of the top journals of the world were locked behind a paywall, illegible, poorly labeled, or difficult to use. This remains largely true in 2024.

The “research-parasites-are-bad” movement didn’t go very far. The importance of data democratization has been argued for many years and has led to meaningful changes in the practice of science. Licensing options through Creative Commons have become standard for published research in many subfields, giving authors a way to state how they want their work to be used. This system includes options that lean toward data democracy, like the CC BY licenses. Notably, several of these licenses allow content to be used commercially.

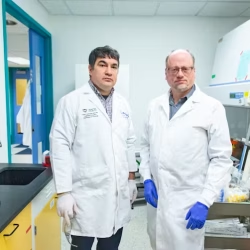

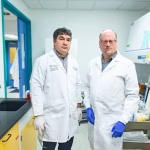

The COVID-19 pandemic was a watershed moment for data sharing. Within days, viral genomic sequences and clinical metadata could be shared around the world, allowing researchers to collaborate towards an understanding of an emerging threat. The associated movement to normalize preprint articles — papers released before undergoing peer review — allowed scientists and public health experts to share full reports in an open-access fashion, temporarily circumventing a peer-review process plagued by inefficiencies.

In light of this, the movement to democratize data seemed to have gained enough momentum to become common practice. But history tells us that new technology is wont to complicate even the most established social and cultural norms. LLMs and other AI technologies have upended many different aspects of modern society. And with respect to data democracy, they are fomenting new reflections around the meaning of data ownership, as well as who can use freely available data, and to what end.

OpenAI and the company’s flagship tools clumsily travel along a line between openness and non-transparency. With regard to openness, a version of ChatGPT is freely available for anyone to use. In this sense, it is tough to level the hard criticism that the tools directly reinforce a digital divide, as anyone with access to the web (an increasing fraction of everyone on Earth) can use them. But this ostensible accessibility is a distraction from the data practices, questionable at best, that drive the technology. OpenAI is famously secretive about the anatomy of its tools: What data and information are being used to build these tools, and how are they being used? It is not hyperbole to suggest that we may never know.

Some of us who advocated most strongly for a data democracy now lament the use of freely available data in support of LLMs. There is nothing inherently contradictory in this position, as data democracy and data privacy need not clash. But I chuckle at how the data-libertarian ideas that I once espoused have been tweaked almost overnight by disruptive technology. The post-AI iteration of my stance is that yes, it does matter how data is used, and the authors of that data probably should have ways to protect what they produce and be remunerated if those productions are used for profit. This is a rich and complex area of ethics, and legal experts navigate this space regularly, most recently through several high-profile lawsuits.

The shape of the modern terrain provides an opportunity to reinvoke the research parasites debate from more than eight years ago. When considering the case being made against the non-transparent use of data to build LLMs, the word “parasitic” finds a more natural home. Even without leaning on a moral judgment, LLMs share features with biological definitions of parasitism. They don’t simply use the resources generated from the world but consume them without considering the context or intention of the person using or generating the data. The context-removal and reconfiguration of the world’s data are part of the reason why AI can hallucinate, espousing nonsense (or bullshit, more accurately) so readily. This is the stuff of the parasites that live in the biological world: Natural selection has wired parasites to extract resources (nutritional, energetic, reproductive, etc.). Evolution and ecology can constrain how this extraction happens; the mechanism often isn’t too conspicuous or costly to the host. Parasitism remains a ubiquitous lifestyle in nature because it is often effective. But the goal of the interaction from the parasite’s side is rather direct: It extracts relevant resources for its own benefit.

The success of LLMs like ChatGPT rests on something very similar. The machines will consume, slice, and repackage our freely available data and content for another purpose. But the differences between the biological inventions crafted by natural selection and the cultural ones that feed these technology conglomerates are as important as their similarities. In the latter case, technology is the product of intentional actions by conscious actors, even if the technology itself isn’t conscious. Consequently, tech conglomerates cannot blame the laws of nature for dodgy practices.

The 2016 New England Journal of Medicine editorial that introduced the research parasite debate offered a concern about the use of data: “The first concern is that someone not involved in the generation and collection of the data may not understand the choices made in defining the parameters.” This is a silly complaint in practice: Re-analysis of data is necessary precisely because the authors of the original studies might have made questionable decisions in the construction of experiments, data collection methods, and statistical reasoning. But the general complaint about blind usage of data, usage that ignores why the data was generated and under what conditions, is relevant when discussing how our data is transformed into a product. And this analogy may help us steer public conversations about the line between data democracy and data parasitism. Instead of banally complaining that AI uses our data, we should focus our angst on the question of whether that usage is parasitic or not.

A scholarly ecosystem where data is available and usable by others makes for better science — and a better society. Such an ecosystem can help us study nature and engineer it towards health and wellness. But the parasite label arises when that usage removes or obfuscates the intentions of the author, towards a product that fills the pockets of people who may not care about us, or the reasons why we make what we make.
