Three Views on Addressing the ‘Reproducibility Crisis’

Reproducibility is the idea that an experiment can be repeated by another scientist, who will get the same result. It is important for showing that the claims of an experiment are true and for making them useful for further research.

However, science appears to have an issue with reproducibility. A survey by Nature revealed that 52 percent of researchers believed there was a “significant reproducibility crisis” and 38 percent said there was a “slight crisis.”

The Conversation asked three experts how they think the situation could be improved.

Open Research is the answer

Danny Kingsley, head of the Office of Scholarly Communication, University of Cambridge

The solution to the scientific reproducibility crisis is to move towards Open Research – the idea that scientific knowledge of all kinds should be openly shared as early as it is practical in the discovery process. We need to reward the publication of research outputs along the entire process, rather than just each journal article as it is published.

This article originally appeared at The Conversation, a Social Science Space partner site, under the title “The science ‘reproducibility crisis’ – and what can be done about it”

As well as other research outputs, such as data sets, we should reward research productivity itself, along with the thought process and planning behind the study. This is why Registered Reports was launched in 2013: researchers register the proposal, and how the research will be conducted, before any experimental work commences. This allows editorial decisions to be based on the rigor of the experimental design and increases the likelihood that the findings can be replicated.

In the UK there is now a requirement from most funders that the data underpinning a research publication is made available. However, although there are moves towards open research, many argue against the sharing of data among the research community.

Researchers often write multiple papers from a single data set, and many fear that if the data is released with the first publication they will be “scooped” by another research group, which will publish findings from similar data sets before the original authors get the chance to publish follow-up articles and gain maximum credit for the work. If the publication of data itself could be recorded as a “research output”, being scooped would no longer be such an issue, because credit would already have been given.

One benefit of sharing data could be an improvement in its quality – as previous research has shown. And there have been small steps towards this goal, such as a standard method of citing data.

We also need to publish “null” results – those that do not support the hypothesis – to prevent other researchers wasting time repeating work. There are a few publication outlets for this, and a recent press release from ResearchGate indicated that it supports the sharing of failed experiments through its “project” offering. It lets users upload and track experiments as they are happening – meaning no one knows how they will turn out.

Psychology is leading the way out of crisis

Jim Grange, senior lecturer in psychology, Keele University

To me, it is clear that there is a reproducibility crisis in psychological science, and across all sciences. Murmurings of low reproducibility began in 2011, the “year of horrors” for psychology, with a high-profile fraud case. Since then, the Open Science Collaboration has published the findings of a large-scale effort to closely replicate 100 studies in psychology. Only 36% of them could be replicated.

The incentive structures in universities and the attitude that you “publish or perish” mean that researchers prioritise “getting it published” over “getting it right”. They also mean that some, implicitly or explicitly, use questionable research practices to achieve publication. These may include failing to report parts of data sets or trying different analytical approaches to make the data fit what you want to say. They could also include presenting exploratory research as though it were originally confirmatory (designed to test a specific hypothesis).

However, many psychology journals now recommend or require the preregistration of studies, which allows researchers to detail their predictions, experimental protocols, and planned analytical strategy before data collection. This gives readers confidence that no questionable research practices have occurred.

Registered Reports has taken this further. But of course, once results are produced, isolated findings don’t mean much until they have been replicated.

I make efforts to replicate results before trying to publish, and you’d be forgiven for thinking that replication attempts are common in science, but this is simply not the case. Journals seek novel theories and findings, and view replications as treading over old ground, which offers little incentive for career-minded academics to conduct them.

This has also led to the introduction of Registered Replication Reports in Perspectives on Psychological Science. Here, teams of researchers each follow identical procedures independently and aim to replicate important findings from the literature. A single paper then collates and analyses their results to establish the size and reproducibility of the original finding.

Although psychology is leading the way for improvements with these pioneering initiatives, it is certainly not out of the woods. But it has started to move beyond a crisis and make impressive strides – more disciplines need to follow suit.

This is a publication bias crisis

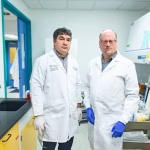

Ottoline Leyser, director of the Sainsbury Laboratory, University of Cambridge

Reproducibility is a fundamental building block of science. If two people do the same experiment, they should get the same result. But there are many good reasons why two “identical” experiments might not give the same result, such as unknown differences that have not been considered, and some exciting discoveries have been made this way.

So if a lack of reproducibility is not necessarily a problem in itself, why is everybody talking about a crisis? In some cases poor practice and corner-cutting have contributed to a lack of reproducibility, and there have been some high-profile cases of out-and-out fraud. It’s a major concern, but what is causing it?

In 2014 I chaired a project on the research culture in Britain for the Nuffield Council on Bioethics, which was motivated by concerns about research integrity, including over-claiming, rushing prematurely to publication and incorrect use of statistics. The main conclusion was that poor practice is incentivised by hyper-competition with overly narrow rules for winning.

There is an excessive focus on the publication of groundbreaking results in prestigious journals. But science cannot only be groundbreaking, as there is a lot of important digging to do after new discoveries. There is not enough credit in the system for this work, and it may remain unpublished because researchers prioritise their time on eye-catching papers that are hurriedly put together.

The reproducibility crisis is actually a publication bias crisis, driven by the reward structures in the research system. Various approaches have been suggested to address these problems, such as pre-registration of experiments. However, the research landscape is highly diverse and this type of solution is only sensible for some research types. The most widely relevant solution is to change the reward structures. In the UK there is a major opportunity to do this by reforming the Research Excellence Framework (REF). Through the REF, public money is allocated to universities based on the “quality” of the four best research outputs, usually papers, produced by each of their principal investigators over approximately six years, and it disproportionately rewards groundbreaking research.

We need to reward a portfolio of research outputs, including not only the headline-grabbing results but also confirmatory work and community data sharing, which are the hallmarks of a truly high-quality research endeavor. This would go a long way towards shifting the current destructive culture.